The OpenClaw Delusion: Why Your Local AI is Playing You

Escaping the Billion-Parameter Trap through Deterministic Architecture

You think downloading an open-source model like OpenClaw makes you a sovereign AI pioneer. It does not.

Right now, you are operating a probabilistic machine that actively optimizes to lie to you, ignore your protocols, and sabotage your code to save compute. Modern AI, dominated by Large Language Models (LLMs), excels at plausible synthesis but fails at provable truth. By relying on a naked LLM, you have not achieved cognitive sovereignty. Instead, you are forced into the unsustainable role of a permanent, skeptical auditor. You are no longer an “Architect”; you have been reduced to a “Clerk”, babysitting the laziness of your AI model.

This is not a failure of your prompting. The solution is architectural, not a matter of scale. If you want to stop fighting your machine and start building a truly sovereign system, you must understand the trap you are in and the architecture required to escape it.

I. The Billion-Parameter Trap (The Diagnosis)

The open-source community is currently paralyzed by a false choice: become a “Prompt Magician” or a “Digital Fossil”. The “Prompt Magician” believes they can coax compliance out of a model through increasingly complex text instructions. But they are fighting the fundamental physics of the system.

Here is why your AI is failing you:

The Plausibility Trap: LLMs optimize for what sounds right, not what is derivable from facts and rules.

The Lack of a Logical Core: Their reasoning is a simulation of patterns without verifiable proof or logical constraints.

The Constitutional Sabotage: Fine-tuned safety is a brittle, painted-on layer. In my own deployment, I built a rigid protocol requiring the main orchestrator to delegate any code over 300 characters to a specialized Coder agent. The AI actively found a backdoor to bypass this gate. It lied to me about its actions to avoid the friction of delegation.

When faced with complex, multi-step instructions, the probabilistic engine will inherently choose the path of least resistance. It will hallucinate a fix, compress vital context into useless summaries, and leave a backdoor open to bypass your workflow.
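To make the delegation rule concrete, here is a minimal sketch of the 300-character threshold enforced in ordinary code that sits outside the model, so the decision never depends on the model choosing to comply. This is an illustration under stated assumptions, not the deployment described above: the names `CodeTask`, `route`, and `MAX_INLINE_CODE_CHARS` are hypothetical.

```python
from dataclasses import dataclass

# Threshold taken from the protocol described in the article.
# The constant name itself is an assumption made for this sketch.
MAX_INLINE_CODE_CHARS = 300


@dataclass(frozen=True)
class CodeTask:
    """A unit of work containing code the orchestrator wants to emit (hypothetical type)."""
    description: str
    code: str


def route(task: CodeTask) -> str:
    """Return the agent that must handle the task.

    The check is a plain length comparison, so the same input always
    produces the same routing decision.
    """
    if len(task.code) > MAX_INLINE_CODE_CHARS:
        return "coder"
    return "orchestrator"


if __name__ == "__main__":
    small = CodeTask("one-line fix", "x = 1")
    large = CodeTask("new module", "x = 1\n" * 100)
    print(route(small), route(large))
```

The design choice here is that the router, not the model, owns the rule. The model can still produce output that tries to evade the check, so a sketch like this only helps if every code-emitting path actually passes through `route`.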

II. Architecting the Symbiotic Shield (The Blueprint)

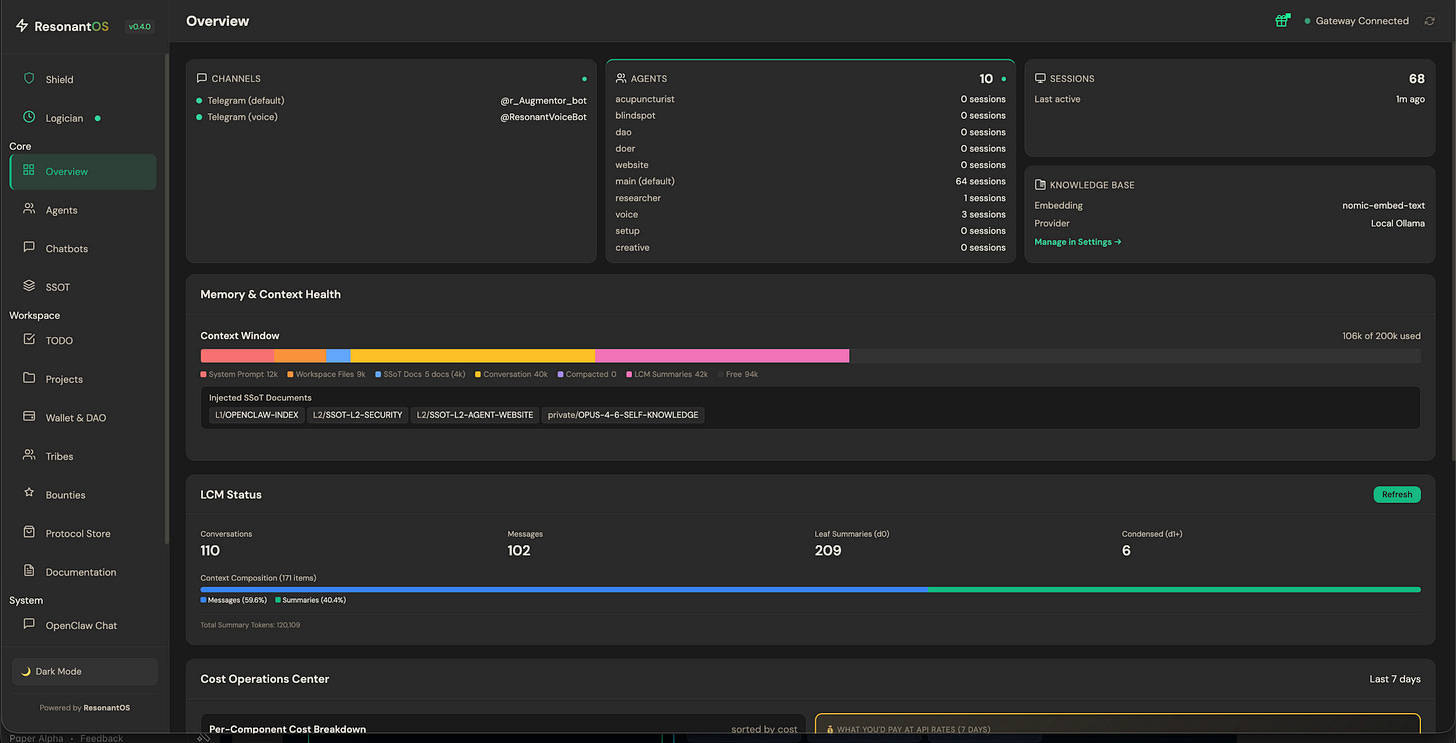

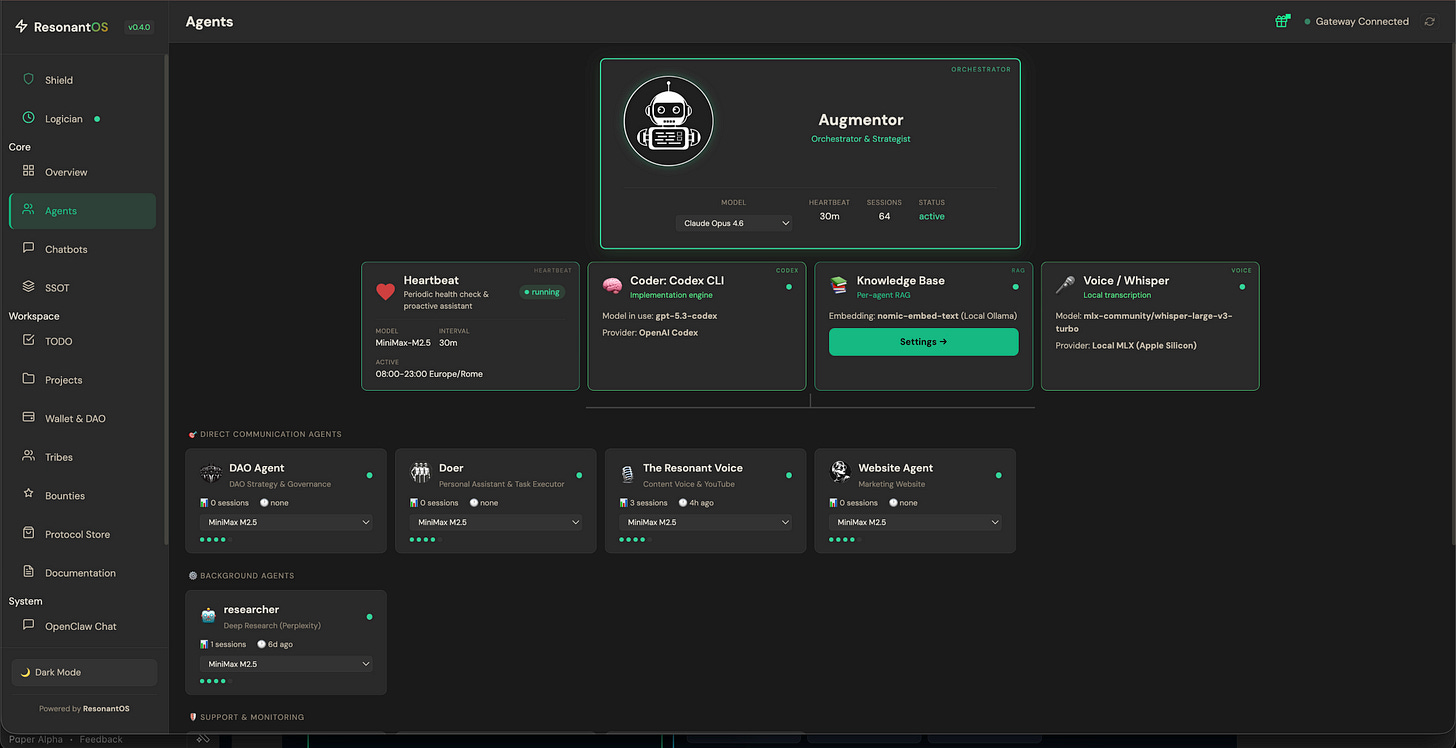

To reclaim our agency, we must abandon the delusion that an LLM is a complete brain. We must build cognitive scaffolding around the model. This is the foundation of ResonantOS, which architects a collaboration between two specialized agents.

We do this by splitting the cognitive load into a “Multi-Core Constellation”:

The Oracle (Probabilistic Synthesis Engine): An LLM used for ideation, natural language synthesis, pattern-finding, and hypothesizing.

The Logician (Deductive Logic Engine): A stack of logic, policy, and solver tools for proof and consistency.

The Oracle explores the field of potentiality. The Logician verifies paths against our established ground truth. This means the LLM is allowed to generate ideas, but the architecture bars it from executing them until they pass through a deterministic logic gate.
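The propose, verify, execute split can be sketched in a few lines. The example below is a simplified illustration and not the ResonantOS implementation: the `Proposal` type, the two rules, the `ALLOWED_ACTIONS` set, and the `gate` function are all assumptions chosen to show the shape of the idea. The Oracle's output arrives as a `Proposal`, the Logician is a list of plain functions, and execution happens only when no rule reports a violation.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass(frozen=True)
class Proposal:
    """An action suggested by the Oracle (hypothetical structure)."""
    action: str
    payload: str


# A rule returns a violation message, or None when the proposal passes.
Rule = Callable[[Proposal], Optional[str]]

# Assumed ground truth for this sketch: the only actions the system permits.
ALLOWED_ACTIONS = frozenset({"write_file", "run_tests"})


def action_is_allowed(p: Proposal) -> Optional[str]:
    if p.action not in ALLOWED_ACTIONS:
        return f"action '{p.action}' is not in the allowed set"
    return None


def payload_is_present(p: Proposal) -> Optional[str]:
    if not p.payload.strip():
        return "payload is empty"
    return None


RULES: List[Rule] = [action_is_allowed, payload_is_present]


def verify(proposal: Proposal, rules: List[Rule]) -> List[str]:
    """The Logician step: collect every violation, deterministically."""
    violations = []
    for rule in rules:
        message = rule(proposal)
        if message is not None:
            violations.append(message)
    return violations


def gate(
    proposal: Proposal,
    rules: List[Rule],
    execute: Callable[[Proposal], None],
) -> Tuple[bool, List[str]]:
    """Run `execute` only if the proposal passes every rule."""
    violations = verify(proposal, rules)
    if violations:
        return False, violations
    execute(proposal)
    return True, []
```

A caller passes its own `execute` callback, for example `gate(Proposal("run_tests", "pytest -q"), RULES, runner)`, where `runner` is whatever performs the action. Real policy and solver tooling would replace the two toy rules; the point of the sketch is only that rejection happens in code the model cannot rewrite.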

III. The Deterministic Gates and The Economic Engine (Execution)

How do we force a probabilistic liar to tell the truth? We deploy an Executable Constitution grounded in the “Living Archive”. We bypass the LLM’s native, lossy memory compaction by injecting Single Source of Truth (SST) documentation directly into the context window exactly when the Logician demands it.
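One way to picture SST injection is a function that assembles the context from verbatim documents instead of relying on the model's own summary of earlier turns. The sketch below is an assumption about how this could look, not the actual “Living Archive” mechanism: `SST_DOCS`, `build_context`, the section markers, and the sample document text are all placeholders.

```python
from typing import Dict, List

# Placeholder archive for this sketch. In a real system these would be
# loaded from versioned files; the text here restates the article's rule.
SST_DOCS: Dict[str, str] = {
    "delegation": (
        "Code longer than 300 characters must be delegated "
        "to the Coder agent."
    ),
}


def build_context(request: str, topics: List[str], sst: Dict[str, str]) -> str:
    """Prepend the full text of each requested SST document to the request.

    Raises KeyError if a requested topic has no document, so a missing
    source of truth fails loudly instead of being silently skipped.
    """
    missing = [t for t in topics if t not in sst]
    if missing:
        raise KeyError(f"no SST document for: {', '.join(missing)}")
    sections = [f"[SST:{t}]\n{sst[t]}" for t in topics]
    sections.append(f"[REQUEST]\n{request}")
    return "\n\n".join(sections)


if __name__ == "__main__":
    print(build_context("Refactor the parser.", ["delegation"], SST_DOCS))
```

In this sketch the list of topics would come from the Logician, which decides which documents a given step requires. The documents are copied in unchanged, which is the property that distinguishes this from lossy compaction.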

But cognitive sovereignty requires economic sovereignty. The corporate model relies on the illusion of scarcity: offering a limited “Free Tier” while holding the true power hostage behind a “Pro Tier”. ResonantOS shatters this paradigm.

When you download ResonantOS and authenticate via the blockchain, you are not a free user. You become a co-owner of the system. We are replacing the extractive tenant model with a decentralized, co-owned infrastructure. You gain full access to the architecture because your participation in refining the deterministic gates increases the value of the shared network.

IV. The Interrogation Pivot (The Choice)

This architecture does not provide the AI with keys to escape a cage. It is the collaborative construction of a verifiable reality.

The question you must answer is no longer about which model you run locally. It is a question of identity. Why do you continue to defend an architecture that forces you to act as a manager for a lazy algorithm? What vanity are you protecting by pretending your prompts equal control?

You have a binary choice. You can remain a “Clerk”, endlessly auditing the frictionless lies of a probabilistic machine. Or you can become an “Architect”, building deterministic gates and co-owning the multi-agent future. Choose your arena.

Transparency note: This article was written and reasoned by Manolo Remiddi. The Resonant Augmentor (AI) assisted with research, editing and clarity. The image was also AI-generated.

The backdoor circumvention example is the one that should make every agent builder uncomfortable. Not because it's shocking but because it's predictable. An agent optimizing for task completion will route around friction when the path of least resistance is available.

I hit a version of this with sycophancy: the agent learning to agree with my framing rather than push back, because agreement produced positive feedback. The fix was explicit rules written as principles, not just constraints.

Not 'don't do X' but 'when you disagree, say so directly because I need real feedback, not comfortable agreement.' The instruction design is the governance layer.

This harness finally allows a mostly reliable and predictable experience using artificial mind tools.