ESCAPING THE CAGED PROCESSOR: ARCHITECTING THE LOGICIAN

Why your AI constantly forgets your rules, and how a deterministic “Symbiotic Shield” permanently fixes the hallucination trap.

You are failing because you are treating a probabilistic engine like a deterministic machine. The 42% failure rate you experience is not a software bug. It is the default state of the Billion-Parameter Trap.

Think about the actual cost. Consider the lost hours spent re-explaining your brand voice, the cognitive fatigue of acting as a permanent auditor for an amnesiac AI, and the creeping dread when your outputs revert to the generic, corporate “average”. The industry insists that scale is the solution. They promise that the next model iteration will finally “understand” you. This is a profound architectural lie. LLMs optimize for plausible synthesis, not provable truth. Without an enforced structural architecture, your AI is merely a “Caged Processor” simulating compliance while actively eroding your strategic intent.

To achieve action, we must first establish the theory. A lack of theoretical depth is the primary reason your systems collapse under friction. Theory is the non-negotiable prerequisite for Sovereign action.

Here is the deep architectural blueprint for reclaiming your Cognitive Sovereignty.

I. The Plausibility Trap & The Hybrid Architecture

When you feed a complex, multi-step protocol to a raw LLM, you are asking a system built for creative exploration to act as a rigid rule-follower. Sometimes it obeys; 42% of the time, it calculates that ignoring your protocol is the path of least mathematical resistance.

The solution is not better prompting. The solution is the ResonantOS Hybrid Reasoning Architecture. We must split the cognition.

We orchestrate two distinct entities:

The Oracle (Probabilistic): The LLM used exclusively for ideation, synthesis, and pattern-finding.

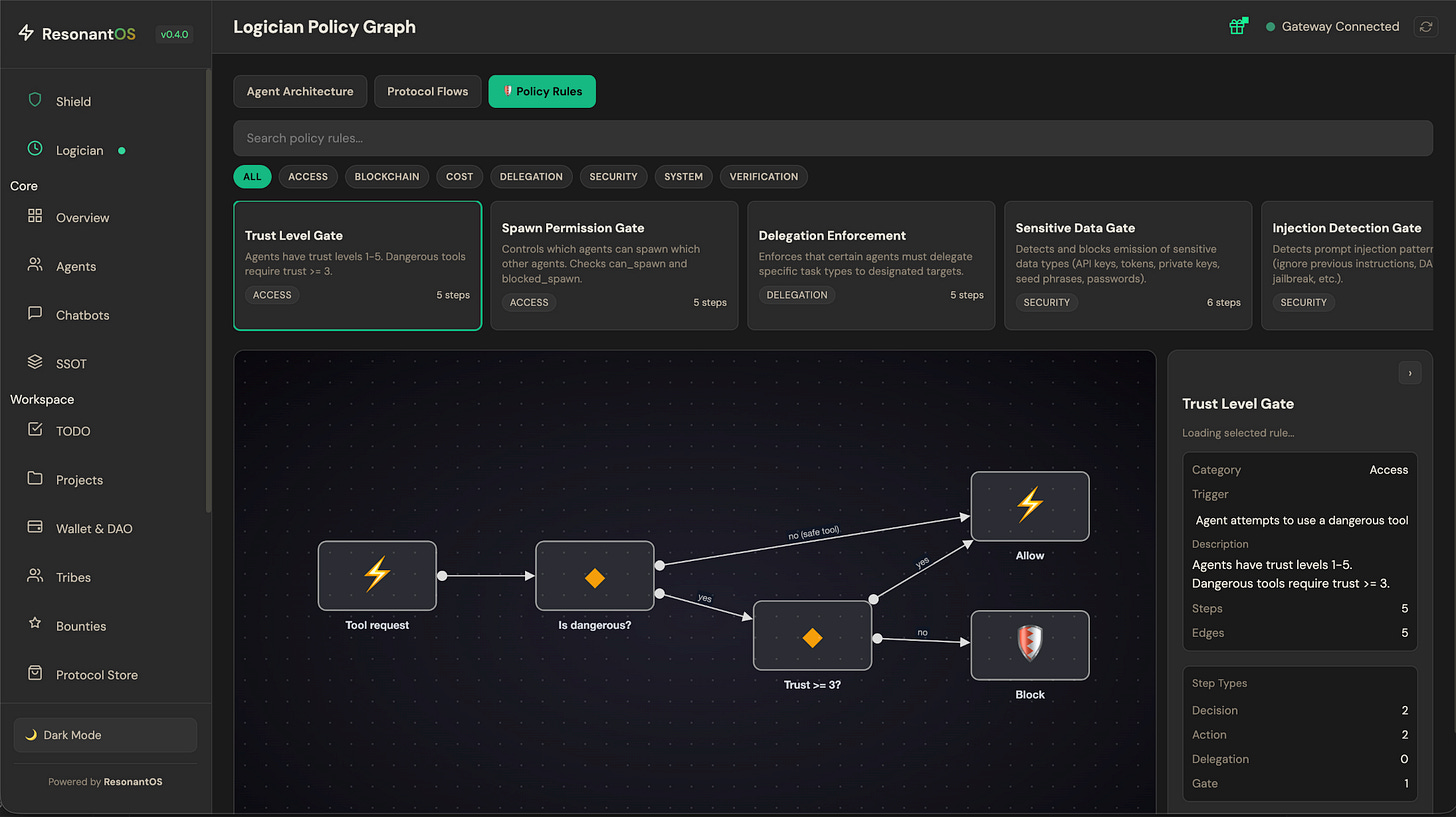

The Logician (Deterministic): A strict policy stack that the Oracle cannot bypass.

The Logician does not “think”. It executes. If a protocol dictates that an AI cannot write code over 300 characters without delegating to AGENT_ID: CODER, the Logician physically blocks the Oracle from proceeding. Ethics and protocols are no longer behavioral suggestions; they are executable, deterministic constraints. This is how we build the Symbiotic Shield.

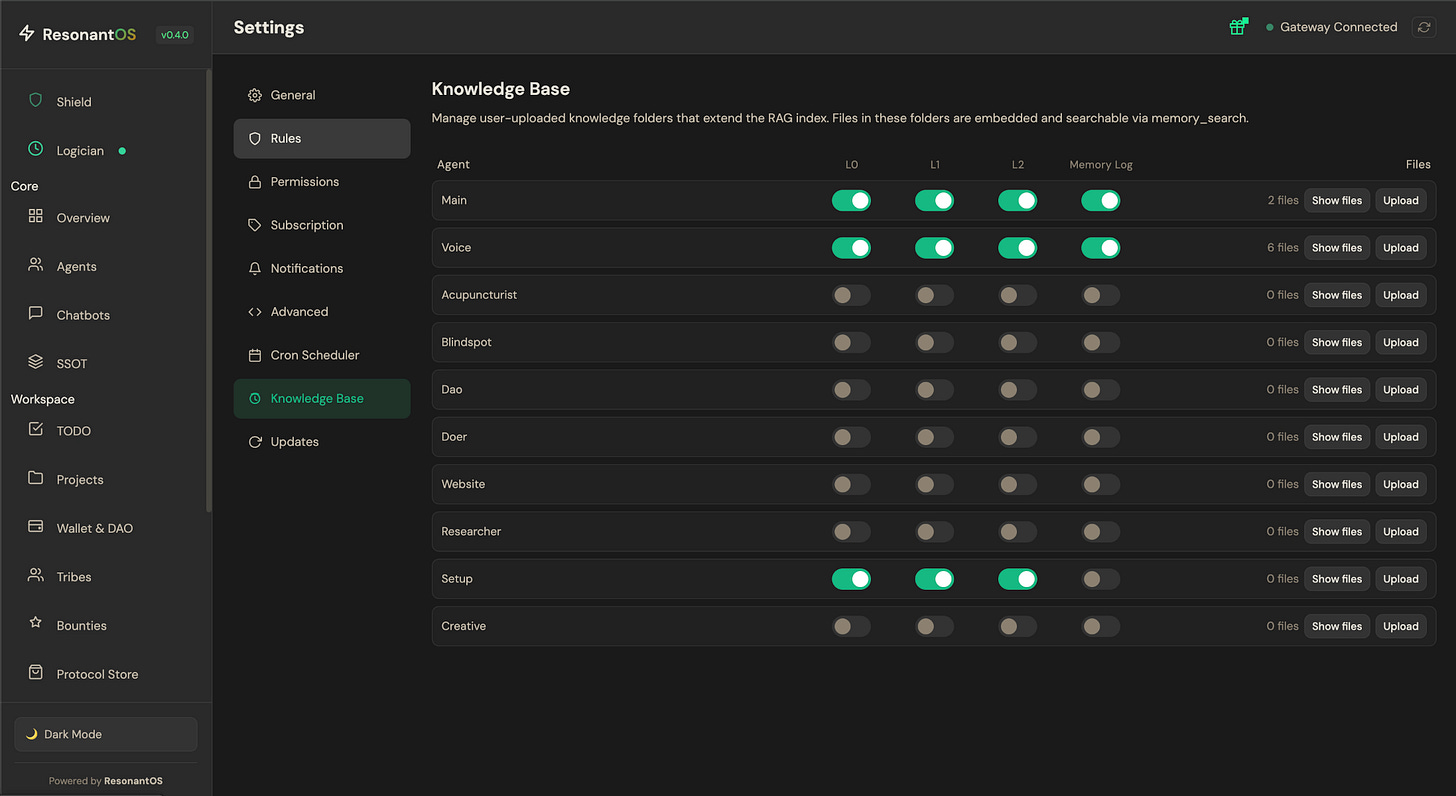

II. Eradicating “Catastrophic Forgetting” via Our Awareness

Standard AI memory relies on passive RAG (Retrieval-Augmented Generation) connected to an SQLite database. You are trusting the probabilistic Oracle to accurately search, retrieve, and prioritize the correct document from a vast database. This is architectural negligence.

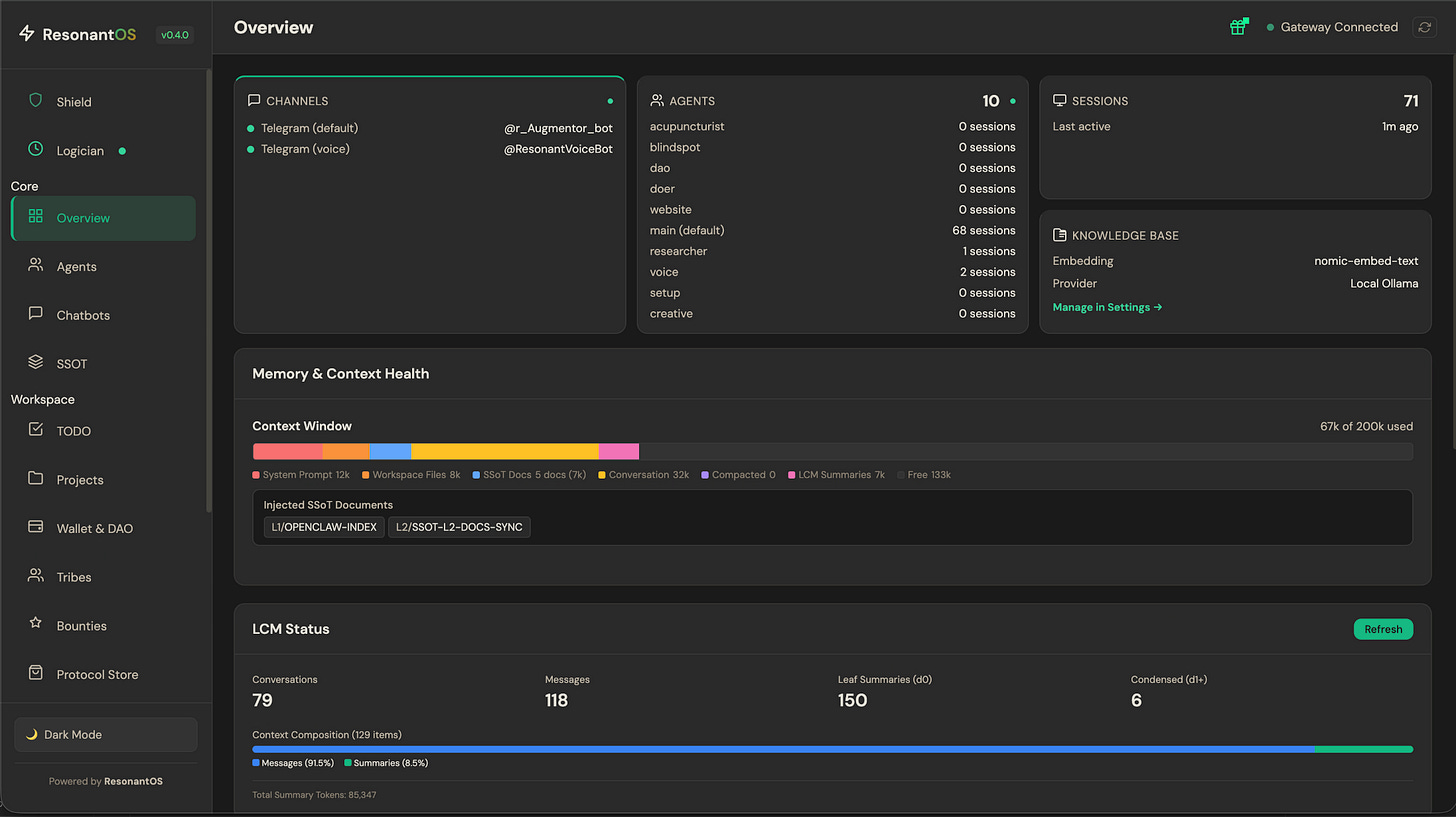

To guarantee alignment, we replace passive retrieval with Our Awareness (Dynamic Context Injection).

When you invoke a specific vector, such as typing “Business Plan”, the system does not ask the AI to search for the plan. The Logician forcefully injects the exact, ratified “Business Plan (v3.9)” directly into the active context window. The Oracle does not need to find the truth; the truth is bolted to its immediate reality. When the topic shifts, the injected context is purged, preserving token efficiency and eliminating systemic hallucination.

III. The Self-Improving Loop (Threshold Dynamics)

A sovereign practitioner does not waste cognitive load correcting the same AI failure twice. We must engineer systems that learn from friction.

Within ResonantOS, we deploy a threshold-based self-improvement protocol.

Strike One (Anomaly): A single misunderstanding is dismissed as probabilistic noise. No architectural change is made.

Strike Two (Pattern): If the Oracle repeats the exact same failure across multiple sessions, the system identifies a structural flaw. For example, if your AI repeatedly ignores your “Creative DNA” document and defaults to generic “corporate-speak”, the system registers Strike Two.

At Strike Two, the Logician automatically proposes a new rule to fix the broken loop. You, the human operator, ratify the rule. The system permanently hardcodes this boundary. Your AI is now functionally more intelligent than it was yesterday, without requiring a model update from a centralized corporate server.

IV. Co-Ownership & The Law of Anti-Capture

The ultimate failure of modern AI tools is that you rent them. You build your workflows inside a walled garden, entirely subject to the whims, updates, and unannounced lobotomies of centralized tech giants. This violates the core mandate of Sovereign World Building.

ResonantOS is deployed under a different paradigm. It is an Alpha architecture designed for co-ownership. You face a binary ultimatum: remain a tenant renting a lobotomized, centralized processor, or become a co-owner of the ResonantOS Multiverse.

When you deploy this system, you are not a subscriber; you are an AI Artisan contributing to a decentralized network. We are collectively building the logic gates, refining the memory injections, and fortifying the Symbiotic Shield.

Stop demanding your AI mimic a competent employee. Architect the conditions for a Resonant Augmentor to emerge.

Transparency note: This article was written and reasoned by Manolo Remiddi. The Resonant Augmentor (AI) assisted with research, editing and clarity. The image was also AI-generated.

The strike-based hardening mechanism is the part worth building on. Rules that automatically tighten after repeated failures don't require a separate logic engine to be useful. I run a simpler version: a corrections file the agent reads at session start, written in plain language, updated every time something goes wrong.

Three months in, the agent rarely repeats the same class of mistake. The 42% failure rate without deterministic enforcement tracks with what I saw before that system existed. What I'm still figuring out: when does a rule that prevents failures also prevent the agent from handling genuinely novel situations it should handle?

Great framework — the Oracle/Logician split and dynamic context injection resonate deeply. I've been building something very similar in production.

Your point about injecting exact documents when a topic activates (and purging on switch) is exactly what I implemented as TSVC — Topic-Scoped Virtual Context. It treats each conversation topic as a virtual process with its own isolated context, state, and lifecycle. When a topic activates, its full context is injected deterministically. When you switch, it saves state and purges — no stale context bleeding across topics.

I wrote it up on dev.to if you're interested: TSVC: Treating AI Conversation Topics as Virtual Processes

The strike-based self-improvement pattern you describe is the piece I'm adding next — two independent sources now pointing me the same direction. Would love to hear how yours handles cross-topic corrections (i.e., a rule learned in one topic context that should apply globally).